
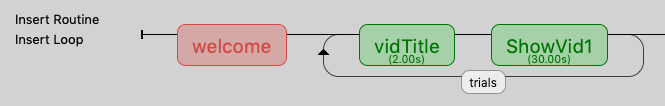

I am currently doing a reaction time and accuracy task that involves comparing visually presented numerical information with auditory numerical information. The visual information will be presented in three forms: Arabic numerals (e.g. 5), number words (e.g. five), and non-symbolic magnitude (a picture of 5 dots). The visual and auditory information will be presented sequentially. After the presentation of the 2nd stimulus, participants are to respond whether the two stimuli convey the same information or not. They are supposed to press "a" if the numerical information is the same and "l" if it's different.

Apart from varying the format of the visual numerical stimuli, I also intend to vary the stimulus onset asynchrony (SOA), i.e. the time interval between the two stimuli. I have 7 levels of SOA (plus and minus 250, 500 and 750 ms, and 0 ms), which is why I built my experiment in this manner (see attached picture). One set of fixation_cross and VA_750ms (for example) constitutes a block, with one block denoting one level of SOA (e.g. +750 ms). Hence, in total, there are 7 blocks here (only 4 are pictured though). I have already randomized the trials within each block. The next step for me is to randomize the presentation of these blocks. To do this, I've placed a loop around all the blocks, with this loop titled "blocknames" in the picture.

I have not used iohub, but we do use psychopy and an eyelink, and therefore some of the following code may be of use to others who wish to invoke more direct communication. If none of the following makes any sense to you, don't worry about it, but maybe it will help others who are stumbling along the same path we are.

Communication between experimental machine and eye tracker machine

First, you have to establish communication with the eyelink. If your experimental machine is turned on and plugged into a live Eyelink computer, then on linux you have to first set your ethernet card up, and then set the default address that Eyelink uses (this also works for the Eyelink 1000; they kept the same address). NB: these commands are being typed into a terminal.

    #To set up connection with Eyelink II computer:

Note your ethernet will probably have a different name than enp4s0. Try simply with ip link and look for something similar.

We have found it convenient to write some functions for talking to the Eyelink computer. sp refers to the tuple of screenx, screeny sizes.

    def eyeTrkInit(sp):
        # Assumption: the line creating the tracker object did not survive in
        # the post; pl.EyeLink() connects to the tracker's default address.
        el = pl.EyeLink()
        # Tell the tracker and the data file what the screen coordinates are.
        el.sendCommand("screen_pixel_coords = 0 0 %d %d" % sp)
        el.sendMessage("DISPLAY_COORDS 0 0 %d %d" % sp)
        el.sendCommand("select_parser_configuration 0")
        el.sendCommand("scene_camera_gazemap = NO")
        el.sendCommand("pupil_size_diameter = %s" % ("YES"))
        # Assumption: the object is returned so the later functions can use it.
        return el

NB: pl comes from import pylink as pl. Also, note that there is another, unrelated python library called pylink that you can find online. Go through the Eyelink forum and get pylink from there.

Calibrate Eyetracker

el is the name of the eyetracker object initialized above, sp is the screen size, and cd is the color depth.

    BlockLabel=(expWin,text="Press the space bar to begin drift correction",pos=, color="white", bold=True,alignHoriz="center",height=0.5)

You can talk to the eye tracker and do things like opening a file:

    def eyeTrkOpenEDF (dfn,el):

There are a number of built-in variables you can set, or you can add your own. Here is an example of sending a message from your python program to the eyelink:

    el.sendMessage("TRIALID " + str(trialnum))

Here is a portion of some code that uses the eyelink's most recent sample in the logic of the experimental program:

    if eventType == pl.STARTFIX or eventType == pl.FIXUPDATE or eventType == pl.
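The setup strings quoted in this thread can be checked without a tracker plugged in. Below is a minimal sketch, assuming a made-up stand-in class `RecordingTracker` (not part of pylink) that only mimics the `sendCommand` and `sendMessage` methods used here, plus two hypothetical helpers, `configure_tracker` and `mark_trial`. It only shows which strings would be sent for a given screen size and trial number.

```python
class RecordingTracker:
    """Hypothetical stand-in for the tracker object; it only records strings."""

    def __init__(self):
        self.commands = []
        self.messages = []

    def sendCommand(self, text):
        self.commands.append(text)

    def sendMessage(self, text):
        self.messages.append(text)


def configure_tracker(el, sp):
    """Send the setup strings quoted in the thread; sp is (screenx, screeny)."""
    el.sendCommand("screen_pixel_coords = 0 0 %d %d" % sp)
    el.sendMessage("DISPLAY_COORDS 0 0 %d %d" % sp)
    el.sendCommand("select_parser_configuration 0")
    el.sendCommand("scene_camera_gazemap = NO")
    el.sendCommand("pupil_size_diameter = %s" % "YES")
    return el


def mark_trial(el, trialnum):
    """Write a TRIALID message, as in the sendMessage example."""
    el.sendMessage("TRIALID " + str(trialnum))


if __name__ == "__main__":
    tracker = configure_tracker(RecordingTracker(), (1024, 768))
    mark_trial(tracker, 3)
    for line in tracker.commands + tracker.messages:
        print(line)
```

Passing the real tracker object instead of the stand-in should send the same strings, provided it exposes the same two methods.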
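On the block-order question at the top of the thread, here is a minimal sketch in plain Python of drawing a random order for the 7 SOA blocks. The names `SOA_LEVELS_MS` and `shuffled_block_order` are made up for illustration, and the optional seed is an assumption added so that an order can be reproduced. This is not how the Builder loop works internally; it only shows the logic.

```python
import random

# The 7 SOA levels from the question, in milliseconds.
SOA_LEVELS_MS = [-750, -500, -250, 0, 250, 500, 750]


def shuffled_block_order(levels, seed=None):
    """Return a new list with the block levels in random order.

    A seed (for example a participant number) makes the order reproducible;
    the input list itself is left untouched.
    """
    rng = random.Random(seed)
    order = list(levels)
    rng.shuffle(order)
    return order


if __name__ == "__main__":
    # One independent block order per (hypothetical) participant number.
    for participant in range(3):
        print(participant, shuffled_block_order(SOA_LEVELS_MS, seed=participant))
```

In Builder the same idea is usually expressed by giving the outer loop a conditions file with one row per block and a random loop type. Whether that fits here depends on how the inner loops are set up.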
